A single deployment window turned into a five-day firefighting operation. What Anthropic characterized as “code quality issues” in their January 2025 postmortem translated to broken integrations, degraded performance, and a cascade of customer complaints that forced the AI company to roll back features shipped just days earlier. The incident wasn’t a catastrophic failure—no data breach, no system meltdown—but something potentially more revealing: a preview of what happens when velocity becomes the only metric that matters.

The pattern emerging across frontier AI labs resembles nothing so much as the subprime mortgage crisis, where individual actors making locally rational decisions created systemic fragility no one entity could control. Ship fast, gather data, iterate—the mantras that built Silicon Valley now generate what engineers are beginning to call AI safety debt. Like financial leverage, it multiplies gains until it multiplies losses. Unlike financial leverage, there’s no regulatory framework forcing disclosure of how much has accumulated.

Anthropic’s stumble matters less for what broke than for what it illuminates about an industry-wide gamble. The company’s own safety-focused positioning made this incident a particularly stark example, but the underlying dynamics—pressure to match competitor release cycles, internal processes that can’t keep pace with capability advances, testing regimes designed for yesterday’s models—are universal. Every major lab faces the same arithmetic: each month of delay costs market share, but each corner cut compounds risk in ways that won’t show up in metrics until much later.

When Testing Can’t Keep Up With Training

OpenAI ships a major update every three months. Google DeepMind runs on similar timelines. Anthropic, despite its deliberate positioning as the safety-conscious alternative, found itself caught in the same compression. The postmortem revealed what practitioners have suspected: comprehensive testing frameworks designed for models with bounded capabilities break down when those bounds keep expanding. A test suite that covered 95% of failure modes in June covers 73% by December, not because the tests got worse, but because the capability surface grew.
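The coverage arithmetic in that last sentence can be made explicit. The sketch below is illustrative only: it assumes the test suite keeps catching the same absolute number of failure modes while the total grows, and that none of the new failure modes are covered. The function names are hypothetical, not drawn from any postmortem.

```python
def implied_surface_growth(initial_coverage: float, later_coverage: float) -> float:
    """How much the failure-mode surface must have grown for a fixed suite
    to fall from initial_coverage to later_coverage (assumption: no new
    failure mode is covered by the existing tests)."""
    if not (0 < later_coverage <= initial_coverage <= 1):
        raise ValueError("expected 0 < later_coverage <= initial_coverage <= 1")
    return initial_coverage / later_coverage


def coverage_after_growth(initial_coverage: float, growth_factor: float) -> float:
    """Coverage of an unchanged test suite after the surface grows by growth_factor."""
    if growth_factor < 1:
        raise ValueError("growth_factor must be at least 1")
    return initial_coverage / growth_factor


growth = implied_surface_growth(0.95, 0.73)
print(f"Implied growth in failure-mode surface: {growth:.2f}x")
print(f"Coverage after that growth: {coverage_after_growth(0.95, growth):.0%}")
```

Under these assumptions, a drop from 95% to 73% corresponds to the capability surface growing by roughly 30% in six months with no change to the tests themselves.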

Numbers tell the acceleration story. GPT-3 took roughly two years from internal development to broad release. GPT-4’s gap narrowed to approximately one year. Industry observers tracking frontier model releases note the pattern: each generation compresses the timeline from capability breakthrough to customer deployment by 30-40%. The testing infrastructure, meanwhile, grows linearly at best. It’s the equivalent of doubling highway speed limits every year while road safety inspections stay on the same monthly schedule.

| Model Generation | Development to Release | Known Issues at Launch | Post-Launch Critical Updates |
|---|---|---|---|
| GPT-3 (2020) | ~24 months | Documented in papers | Infrequent |
| GPT-4 (2023) | ~12 months | Some documented | Quarterly |
| Claude 3.5 (2024) | ~6 months | Discovered post-launch | Monthly+ |
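As a rough check on the compression claim, the per-generation reduction can be computed directly from the approximate timelines in the table. This is a minimal sketch: the month values are the table's rounded figures, and the function name is hypothetical.

```python
# Approximate development-to-release timelines, taken from the table above.
timelines_months = [
    ("GPT-3 (2020)", 24),
    ("GPT-4 (2023)", 12),
    ("Claude 3.5 (2024)", 6),
]


def compression_per_generation(timelines):
    """Return (previous, current, fractional reduction) for each consecutive pair."""
    steps = []
    for (prev_name, prev_months), (name, months) in zip(timelines, timelines[1:]):
        steps.append((prev_name, name, 1 - months / prev_months))
    return steps


for prev_name, name, reduction in compression_per_generation(timelines_months):
    print(f"{prev_name} -> {name}: {reduction:.0%} shorter")
```

Taken at face value, the table's rounded figures imply a reduction of about 50% per row, somewhat steeper than the 30-40% range attributed to industry observers. Both should be read as approximations.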

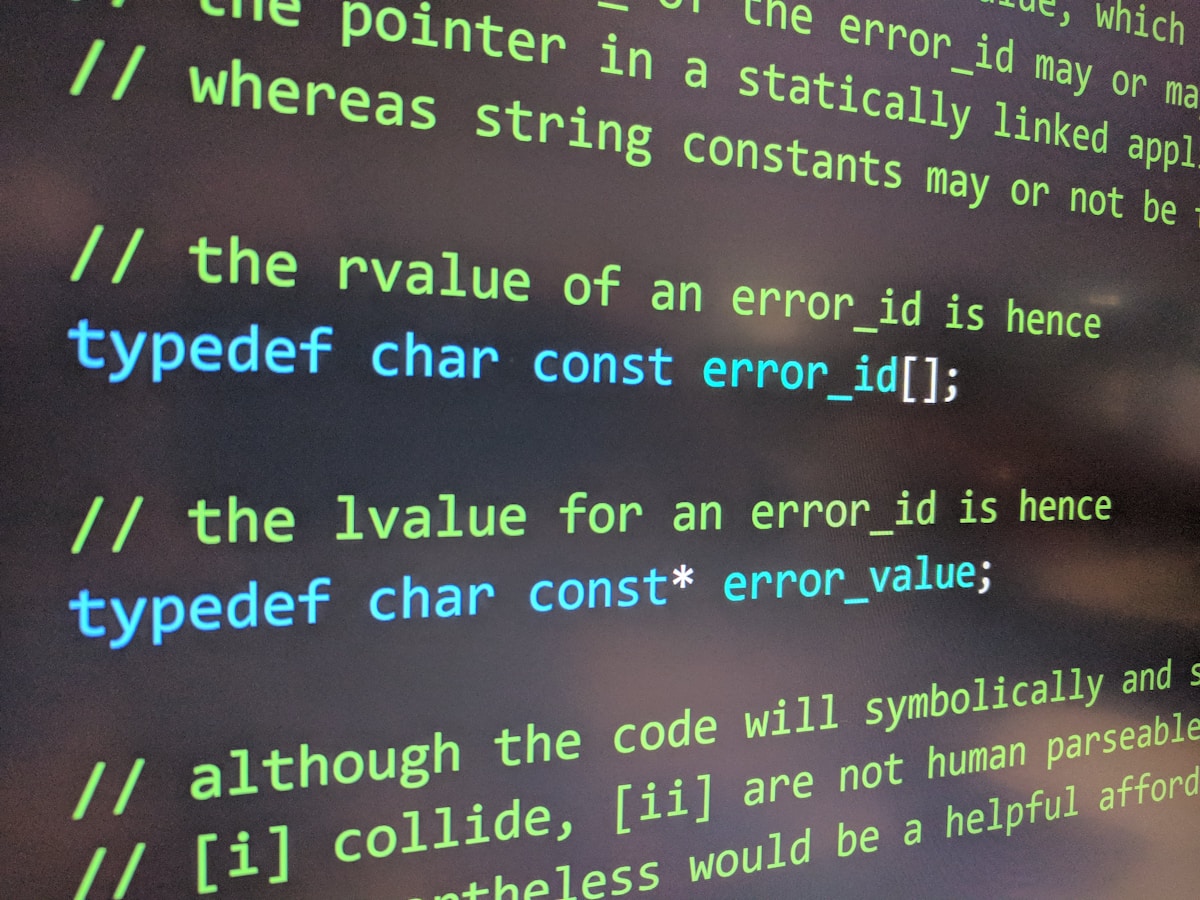

The compression creates a knowledge gap that no amount of computational power can close. Safety testing relies on red-teaming, adversarial probing, and systematic exploration of edge cases—all fundamentally human-speed activities. You can’t throw more GPUs at the problem of imagining what might go wrong. Anthropic’s incident emerged precisely at this friction point: features developed at machine speed, validated at human speed, then deployed at market speed.

The Denominator Problem

Failure rates that look acceptable in isolation become catastrophic at scale. Anthropic’s code generation issues affected what the company described as “a subset of use cases,” which is often industry shorthand for double-digit percentage impacts. When that subset is drawn from millions of daily queries, the absolute numbers balloon. Even a 5% failure rate sounds manageable until it means 50,000 failures for every million queries.
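The denominator effect is simple multiplication, sketched below. The query volumes are illustrative assumptions, not figures reported by Anthropic, and the function name is hypothetical.

```python
def expected_failures(volume: int, failure_rate: float) -> int:
    """Expected number of failed interactions for a given volume and failure rate."""
    if volume < 0 or not (0 <= failure_rate <= 1):
        raise ValueError("volume must be non-negative and failure_rate within [0, 1]")
    return round(volume * failure_rate)


# Hypothetical daily volumes; the 5% rate is the article's illustrative figure.
for volume in (1_000, 100_000, 1_000_000, 10_000_000):
    print(f"{volume:>12,} queries at 5% -> {expected_failures(volume, 0.05):>9,} failures")
```

The rate stays constant across every row. Only the denominator changes, and with it the amount of debugging work.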

Scale transforms the math of acceptable risk. Early-stage startups can afford a “move fast and break things” posture because the denominator—total users, total interactions, total dependencies—stays small. Frontier AI labs now serve enterprise customers where a single model integration might touch hundreds of downstream systems. The blast radius of a bad deployment no longer measures in annoyed users but in broken supply chains, halted medical research, or corrupted financial models.

AI safety debt accumulates in this denominator. Each new capability, each expanded use case, each additional integration point increases the number of ways the system can fail. The debt isn’t in the code itself but in the widening gap between what the system can do and what the creators understand about how it will be used. Anthropic’s engineers likely tested Claude’s code generation extensively—against known benchmarks, standard libraries, common patterns. They couldn’t test against the infinity of ways customers would chain these capabilities together in production environments.

Mortgage-Backed Prompts

The financial crisis analog runs deeper than metaphor. Banks securitized individual mortgages into complex instruments where risk became impossible to assess. AI companies are effectively securitizing uncertainty—packaging capabilities whose failure modes are poorly understood into products deployed across critical infrastructure. The difference: mortgage originators eventually faced margin calls. AI safety debt comes due on an unknown schedule.

“We’ve built evaluation systems that tell us a model can write Python. What we haven’t built is systems that tell us what happens when ten million people use that Python-writing capability in ways we never imagined, all at once.”—Former safety researcher at a frontier AI lab

This uncertainty creates perverse incentives. Companies racing to market can externalize AI safety debt onto customers, who discover limitations only through costly production failures. The competitive dynamic rewards whoever ships first and apologizes later, because by the time “later” arrives, you’ve already captured market share. Anthropic’s relatively quick postmortem and rollback might represent best-in-class incident response—which should be alarming, not reassuring, about industry baseline practices.

February becomes the new December in this cycle. If Anthropic, with its explicit safety mission and research pedigree, ships features that require rapid rollback, what’s happening at labs where safety is a nice-to-have rather than a founding principle? The incident suggests that AI safety debt isn’t being managed differently by the “responsible” players—it’s accumulating everywhere, just being disclosed differently.

The Six-Month Horizon

Two trajectories are diverging. Model capabilities continue their exponential climb—current generation models demonstrate reasoning and coding abilities that would have seemed implausible three years ago. Safety infrastructure advances linearly, constrained by the human-speed work of imagining failures, building tests, and validating approaches. The gap between these lines is AI safety debt, and it’s growing.
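A toy model makes the shape of that gap concrete. Every number here is a hypothetical assumption chosen for illustration (capability doubling every 12 months, safety capacity gaining a fixed 0.05 units per month, both starting at 1.0). Nothing in it is a measurement of any real lab.

```python
def capability(months: float, doubling_months: float = 12.0, start: float = 1.0) -> float:
    """Exponential growth: doubles every doubling_months (assumed value)."""
    return start * 2 ** (months / doubling_months)


def safety_capacity(months: float, monthly_gain: float = 0.05, start: float = 1.0) -> float:
    """Linear growth: adds a fixed monthly_gain each month (assumed value)."""
    return start + monthly_gain * months


def safety_debt(months: float) -> float:
    """Gap between the two curves, floored at zero."""
    return max(0.0, capability(months) - safety_capacity(months))


for m in (0, 6, 12, 18, 24):
    print(f"month {m:>2}: capability={capability(m):.2f} "
          f"safety={safety_capacity(m):.2f} debt={safety_debt(m):.2f}")
```

With these assumed parameters the gap is zero at the start and widens every month afterward. Changing the parameters changes the size of the gap, but any exponential curve eventually pulls away from any linear one.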

Six months from now, this gap will force a reckoning. Either labs slow down—accepting competitive disadvantage to rebuild testing and safety infrastructure—or incidents like Anthropic’s become routine background noise. The optimistic scenario involves customers demanding better, possibly through contract terms that penalize instability. Enterprise buyers who’ve now experienced the sharp edge of rapid deployment might start negotiating SLAs that include safety provisions, creating market pressure for more conservative release cycles.

The pessimistic scenario looks like normalization. If every lab ships broken features monthly, none of them face competitive penalty for doing so. AI safety debt becomes like technical debt in consumer software: everyone knows it exists, everyone agrees it’s bad, and everyone keeps accumulating it because the incentives haven’t changed. The industry settles into a rhythm of perpetual beta, where “postmortem” becomes a regular content category rather than a crisis response.

Regulation might force the issue, though current proposals focus more on capability guardrails than deployment practices. Existing software safety regulations weren’t designed for systems that improve continuously and fail in emergent ways. Aviation-style incident reporting could help—mandatory disclosure of safety-critical failures, shared learning across labs—but requires industry cooperation or regulatory mandate, neither of which appears imminent.

When Debt Comes Due

The bill arrives not as a single catastrophic event but as a rising cost of doing business. Companies will hire larger red teams, build more elaborate testing infrastructure, slow release cycles—all catching up to where they should have been before acceleration began. AI safety debt, like technical debt, charges compound interest. Every month it accumulates makes the eventual paydown more expensive.

Customers are already pricing in this risk. Enterprise contracts now routinely include AI-specific liability clauses and performance guarantees that didn’t exist two years ago. The more incidents like Anthropic’s occur, the higher this insurance premium climbs, until the cost of managing safety debt post-deployment exceeds the cost of preventing it pre-deployment. Market forces will eventually enforce what best practices currently recommend.

The question isn’t whether AI safety debt gets addressed but how much accumulates before it becomes unbearable. Anthropic’s incident represents a small tremor, not the earthquake. But tremors predict larger seismic events. Somewhere in the gap between what frontier models can do and what their creators understand about deployment at scale, a more consequential failure is accumulating probability mass.

FetchLogic Take

Within 18 months, at least one frontier AI lab will implement a mandatory “safety sprint” between capability development and customer deployment—not from regulatory pressure but from investor demand following a high-profile enterprise customer defection. The trigger will be a Fortune 500 company publicly migrating away from a frontier model after a production incident, citing safety infrastructure inadequacy in their announcement. This will create the first market-driven forcing function for AI safety debt reduction, proving more effective than any policy proposal currently under discussion. The lab that implements comprehensive safety infrastructure first won’t sacrifice market share—they’ll command a premium.

